Best Practices: 8 Mozenda Scraper Tools Every User Should Know About

November 01, 2016

Capturing data from a website isn’t always easy, so it pays to know the tools you have at your disposal. Since Mozenda started in 2007, we’ve made a lot of improvements to our software to streamline this process as much as possible.

Based on ongoing customer feedback to Mozenda support, we’ve compiled a list of the most useful techniques and tools that set us apart from the competition and make it simpler to get the data you need.

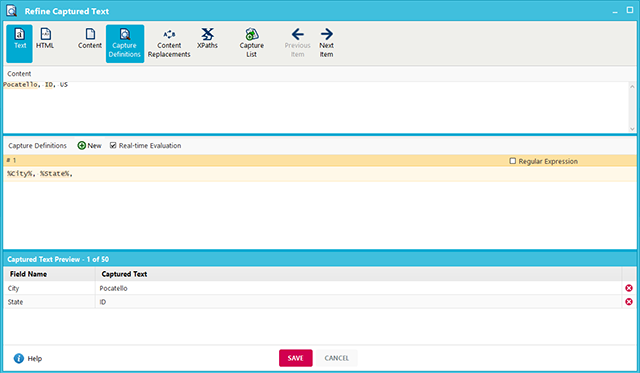

- Refine Captured Text. The Refine Captured Text window offers several tools for filtering captured data. After selecting an element that contains text, use the Capture Definitions tool to keep only the data you need. Advanced users can apply regular expressions to find pattern-based data, such as ZIP codes and phone numbers.
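
To show what pattern-based capture involves, here is a short sketch using Python’s standard `re` module. It runs outside Mozenda. The sample text and both patterns are illustrative assumptions, not Mozenda’s built-in definitions, and the regex dialect Mozenda uses may differ in its details.

```python
import re

# Hypothetical captured text standing in for the contents of a page element.
captured = "Store #12, 455 Main St, Provo, UT 84601. Call (801) 555-0142 or 801-555-0199."

# US ZIP code: five digits, optionally followed by a hyphen and four more digits.
ZIP_RE = re.compile(r"\b\d{5}(?:-\d{4})?\b")
# North American phone number in a few common layouts, e.g. (801) 555-0142 or 801-555-0199.
PHONE_RE = re.compile(r"\(?\b\d{3}\)?[-. ]?\d{3}[-.]\d{4}\b")

zip_codes = ZIP_RE.findall(captured)
phones = PHONE_RE.findall(captured)
print(zip_codes)
print(phones)
```

Real pages tend to be messier than this sample. Test a pattern against several captured values before you rely on it.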

The Content Replacements tool is essentially a “find and replace” function for an individual capture action. This can be used to replace entire words or remove unnecessary characters, making it easier to gather clean data up front.
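
A set of content replacements behaves like a series of find-and-replace rules applied in order. The Python sketch below is only an analogy and does not show how Mozenda stores or runs its rules. It applies a hypothetical list of rules to a hypothetical captured price string.

```python
import re

# Hypothetical raw value captured from a product listing (note the non-breaking space).
raw = "  Price:\xa0$1,299.00 USD  "

# Each (pattern, replacement) pair acts like one find-and-replace rule,
# applied in order to the captured text.
REPLACEMENTS = [
    (r"Price:", ""),  # drop a label that isn't part of the value
    (r"USD", ""),     # drop the currency code
    (r"[$,]", ""),    # strip the dollar sign and thousands separators
    (r"\s+", " "),    # collapse whitespace, including non-breaking spaces
]

def clean(text: str) -> str:
    for pattern, replacement in REPLACEMENTS:
        text = re.sub(pattern, replacement, text)
    return text.strip()

print(clean(raw))  # 1299.00
```

The rules run in order, so an earlier rule can change what a later rule sees. That makes it worth checking the order whenever you combine several replacements.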

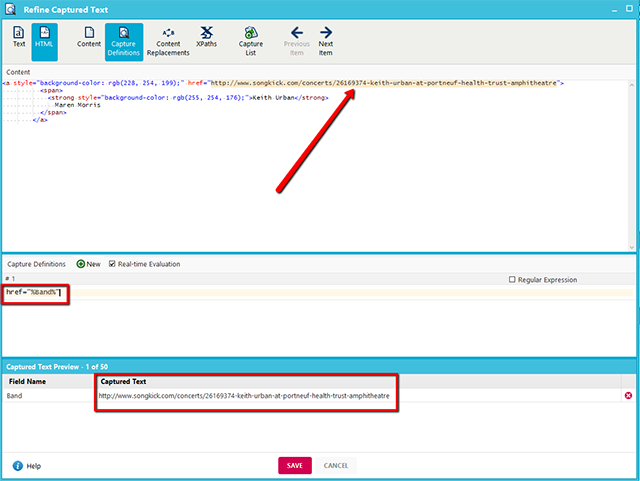

- Directly load a URL without clicking. Instead of navigating through multiple Click Item actions, load the URL of the page that contains the data you want to capture. This cuts down the number of steps (and the margin for error) when building an agent. It also helps on websites with advanced navigation that doesn’t respond to a regular Click Item action. You can combine this method with the Refine Captured Text tool: extract a link’s URL, then use a Load Page action to load that URL directly into a new page. To do this automatically for a list of links, use a parameter for the URL.
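
The "extract the link, then load it directly" idea looks roughly like this in plain Python. The sketch pulls `href` values out of a hypothetical listing page and resolves relative links into absolute URLs that could then be loaded one at a time. The page URL and markup are made up for illustration. In Mozenda, Refine Captured Text handles the extraction and a Load Page action handles the loading.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag, resolved against the page URL."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                # Relative hrefs are common, so turn each one into an absolute URL.
                self.links.append(urljoin(self.base_url, href))

# Hypothetical listing page and markup.
page_url = "https://www.example.com/stores/"
html = '<a href="utah/provo">Provo</a> <a href="/stores/utah/orem">Orem</a>'

collector = LinkCollector(page_url)
collector.feed(html)
collector.close()
for url in collector.links:
    # Each URL would be handed to a page-load step, one at a time.
    print(url)
```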

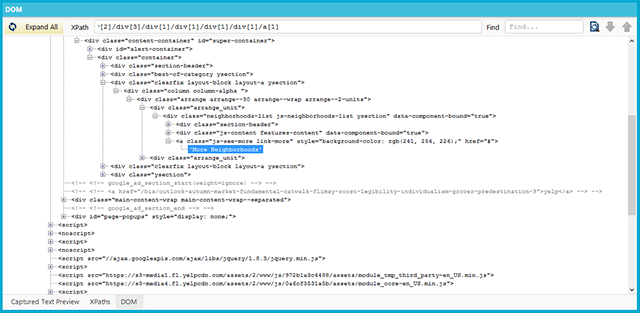

- Use the DOM window to pinpoint HTML elements. With the default Mozenda capture actions, a website’s structure can sometimes cause the wrong element to be selected. The DOM window displays a web page’s code in a hierarchical, folder-like format, so you can track down the exact HTML element that contains the data. Once you find the correct element in the DOM window, you can recreate the capture action or adjust its XPath to match. This method can also collect hidden data that appears in the page’s code but not on the rendered page.
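
The sketch below uses Python’s standard `xml.etree.ElementTree` to show why a precise path matters. ElementTree supports only a small subset of XPath, and it needs well-formed markup. The fragment, tag names, and class names are hypothetical, and these expressions are not necessarily the ones Mozenda would generate.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed page fragment. ElementTree needs valid XML, so real
# HTML usually has to be cleaned up (or parsed with an HTML-aware library) first.
page = """
<div id="product">
  <h1>Trail Shoe</h1>
  <div class="price"><span class="label">Price</span><span class="amount">89.99</span></div>
  <span class="sku" style="display:none">TS-1042</span>
</div>
"""

root = ET.fromstring(page)

# A precise path selects the amount span rather than its parent div,
# which would also include the "Price" label.
amount = root.find(".//div[@class='price']/span[@class='amount']")
# The SKU is present in the markup but hidden by CSS, so it never shows on the rendered page.
sku = root.find(".//span[@class='sku']")

print(amount.text, sku.text)  # 89.99 TS-1042
```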

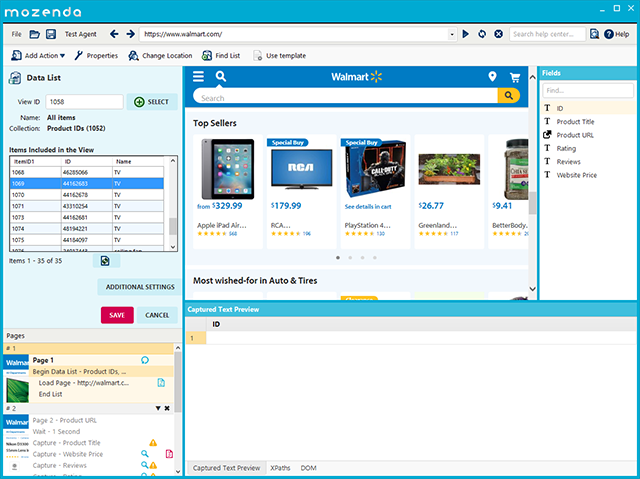

- Upload a list of data using the Input List function. An Input List action lets you upload a list of inputs to search for, such as URLs, product numbers, or ZIP codes, directly to Mozenda. The software then iterates through the list automatically. In the image below, the user is uploading a list of states to collect store-location data for all 50 states.
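
An Input List runs the same actions once for each row in the list. The Python sketch below shows that loop for a short list of states and builds one search URL per state. The store-locator URL and the `state` query parameter are hypothetical placeholders and are not part of Mozenda.

```python
from urllib.parse import urlencode

# Hypothetical input list, like a file of states uploaded as an Input List.
states = ["Utah", "Idaho", "New Mexico"]

# Hypothetical store-locator URL; a real site and its parameter names would differ.
BASE_URL = "https://www.example.com/store-locator"

def search_url(state: str) -> str:
    # urlencode encodes spaces (as "+") and other reserved characters in the value.
    return f"{BASE_URL}?{urlencode({'state': state})}"

# One search per input row, mirroring how an agent iterates through the list.
urls = [search_url(s) for s in states]
for url in urls:
    print(url)
```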

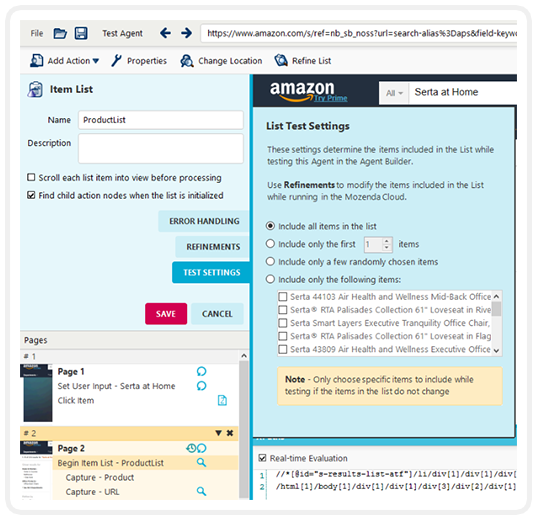

- Test an agent before processing. An essential part of building an agent is making sure it works as intended. The Agent Builder includes a testing option that performs the agent’s actions and shows a preview of the captured data without using any page credits. To test with only some of the items in a list, right-click the item list and select Test Settings. From there, you can limit the list to a set number of items. This limit applies only while the agent is being tested.

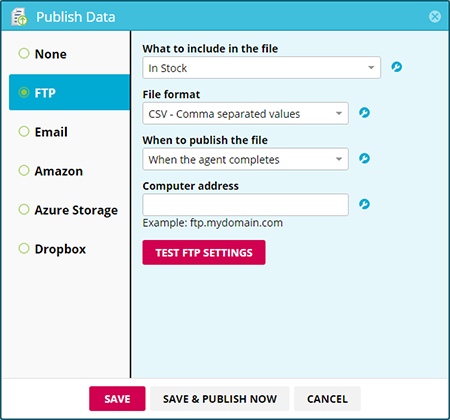

- Automate data delivery. After Mozenda captures a dataset, you can publish a file directly to email, Dropbox, Amazon S3, Microsoft Azure, or an external server via FTP. Publishing will occur after the agent runs and completes successfully.

For advanced users, the Mozenda API can be used to start an agent and publish the results on demand with additional parameters for agent settings, file destination, and more.

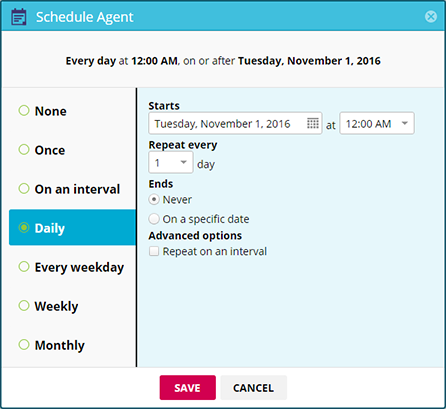

- Schedule agents to run automatically. You can configure agents to run on a schedule, so there is no need to start them manually from the web console. An agent can run several times a day, once a week, once a month, and so on.

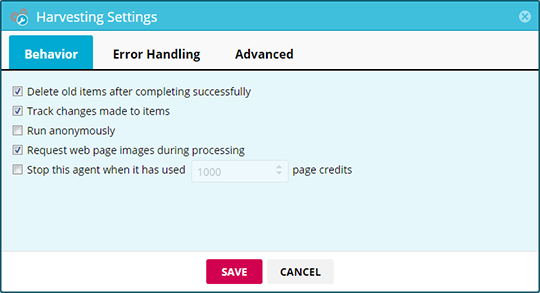

- Track changes in captured data. This feature keeps records of previous agent jobs and will show when items in a collection have been changed, added or removed. This automates the process of monitoring for updates to important data.

At this time, this feature is limited to reporting the fields that have changed between jobs. It does not indicate precise differences between old and new data.
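
Mozenda performs this comparison for you. The Python sketch below only illustrates the general idea of comparing the results of two jobs. The item IDs and fields are hypothetical, and the sketch assumes that every item has a stable unique key. Like the feature described above, it reports which fields changed but not their old and new values.

```python
# Hypothetical results from two agent jobs, keyed by a unique item ID.
previous = {
    "A100": {"name": "Trail Shoe", "price": "89.99", "stock": "In stock"},
    "A101": {"name": "Road Shoe", "price": "74.99", "stock": "In stock"},
}
current = {
    "A100": {"name": "Trail Shoe", "price": "84.99", "stock": "Low stock"},
    "A102": {"name": "Sandal", "price": "29.99", "stock": "In stock"},
}

def compare_jobs(old: dict, new: dict) -> dict:
    added = sorted(new.keys() - old.keys())
    removed = sorted(old.keys() - new.keys())
    changed = {}
    for key in sorted(new.keys() & old.keys()):
        # Report only the names of fields whose values differ between jobs.
        fields = sorted(
            f for f in old[key].keys() | new[key].keys()
            if old[key].get(f) != new[key].get(f)
        )
        if fields:
            changed[key] = fields
    return {"added": added, "removed": removed, "changed": changed}

print(compare_jobs(previous, current))
```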


Have questions or comments? We’d love to hear from you. Call 801.995.4550 or email us at support@mozenda.com.