Website Scraping Use Cases from the Fortune 500

April 25, 2019

This is Kaleb Mangum, VP of Sales & Marketing at Mozenda. A few months back, I shared some of the ways our Fortune 500 clients use website scraping to make competitive business decisions. I thought it would be worth posting those use cases to our blog so everyone can benefit. If you're more of an auditory learner, these use cases are also available as an audio recording.

Website Scraping Use Case #1: Location Data

Whether you’re looking for leads, growth opportunities or market research, understanding your competitive landscape is essential. We’ve seen our Fortune 500 customers do this in a few different ways.

The first method is to scrape location data directly from websites that offer a store finder feature. This may require you to enter ZIP codes or page through multiple screens of results. Using Mozenda, you can compile a comprehensive list of these physical business locations.

For example, AMSOIL uses Mozenda to build a searchable database of store locations where customers can make in-store purchases. Every day, AMSOIL's data team runs routine scrapes of specific geographic areas to see when new mechanic shops or auto parts stores appear. When a new shop pops up, so does a new pool of sales prospects for AMSOIL, and the data team adds the new location to its database. Beyond location data, AMSOIL also displays its partners' store phone numbers, addresses, and product lists directly on the AMSOIL site, making it easy for customers to make purchasing decisions.
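To make the daily-comparison idea concrete, here is a minimal Python sketch of how a data team might spot new locations by comparing a fresh scrape against the locations already on file. It assumes the scrape yields records with a name, street address, and ZIP code; the `StoreLocation` class and helper functions are hypothetical and are not part of Mozenda or AMSOIL's actual pipeline.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StoreLocation:
    """One scraped store-finder result (hypothetical fields for illustration)."""
    name: str
    address: str
    zip_code: str
    phone: str = ""


def location_key(store: StoreLocation) -> tuple[str, str]:
    """Normalize address and ZIP so formatting differences don't create false 'new' stores."""
    address = " ".join(store.address.lower().replace(".", "").split())
    return (address, store.zip_code.strip()[:5])  # keep only the 5-digit ZIP


def find_new_locations(known: list[StoreLocation],
                       scraped: list[StoreLocation]) -> list[StoreLocation]:
    """Return scraped locations whose normalized key is not already on file."""
    known_keys = {location_key(s) for s in known}
    new_stores = []
    for store in scraped:
        key = location_key(store)
        if key not in known_keys:
            new_stores.append(store)
            known_keys.add(key)  # don't report the same new store twice in one scrape
    return new_stores


# Usage (sample data is made up):
known = [StoreLocation("Main St Auto", "12 Main St.", "84101")]
scraped = [
    StoreLocation("Main St Auto", "12 main st", "84101-1234"),  # same store, different formatting
    StoreLocation("Quick Lube", "400 State St", "84111"),       # genuinely new
]
print([s.name for s in find_new_locations(known, scraped)])
```

Normalizing the address before comparing is the key design choice here: without it, "12 Main St." and "12 main st" would be counted as two different stores.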

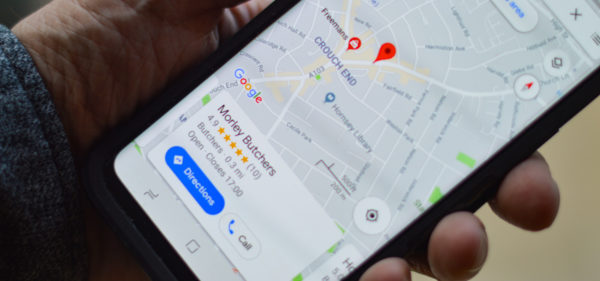

The second location data harvesting method is to scrape large aggregator sites relevant to your industry. Think yellowpages.com or yelp.com, or whatever website houses data that could help you understand the market footprint in specific geographic areas. What data do you need to identify market saturation and expansion opportunities? In most cases, that data is already aggregated online; all you need to do is harvest it in a schema that supports pivotal business decisions. For our Fortune 500 clients, this location-specific data gives them the confidence to make sound decisions such as opening new storefronts or closing underperforming locations.

Some of the data points our clients pull to make these decisions include:

- Competitors: the number of competitor operations in specific geolocations

- Ratings: competitor customer ratings in specific geolocations

- Prices: competitor prices in specific geolocations
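Once listings are scraped, turning them into the per-area figures above is mostly grouping and averaging. The minimal Python sketch below assumes each listing is a dict with hypothetical `zip`, `rating`, and `price` keys, and groups by ZIP code as a stand-in for whatever geography you actually use.

```python
from collections import defaultdict
from statistics import mean


def summarize_by_zip(listings):
    """Group scraped competitor listings by ZIP code.

    Each listing is assumed to be a dict with hypothetical keys
    'zip', 'rating' (e.g. 1-5 stars) and 'price' (a number).
    Missing ratings or prices are skipped rather than counted as zero.
    """
    groups = defaultdict(list)
    for listing in listings:
        groups[listing["zip"]].append(listing)

    summary = {}
    for zip_code, items in groups.items():
        ratings = [i["rating"] for i in items if i.get("rating") is not None]
        prices = [i["price"] for i in items if i.get("price") is not None]
        summary[zip_code] = {
            "competitors": len(items),  # number of competitor operations
            "avg_rating": round(mean(ratings), 2) if ratings else None,
            "avg_price": round(mean(prices), 2) if prices else None,
        }
    return summary


# Usage (sample data is made up):
listings = [
    {"zip": "84101", "rating": 4.0, "price": 30.0},
    {"zip": "84101", "rating": 3.0, "price": 40.0},
    {"zip": "84111", "rating": None, "price": 25.0},
]
print(summarize_by_zip(listings))
```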

Website Scraping Use Case #2: Product Data

We know how important it is to gather, update, and publish the most current product data for your customers. If you're a retailer, manufacturer, or distributor, you're tracking many different data points, and those data points often live in different silos: product descriptions might live in one system, images and media in another, and product reviews in yet another. At some point, these data points come together in one common layout on the website, where they can be extracted. Many of our clients extract this data from their own websites because their live site is where they can get the most up-to-date, aggregated view of their products. For these Mozenda customers, it's easier to pull product data from the HTML of their live site than from the multiple internal silos where it lives.

Retailers are also often frustrated that their manufacturers or distributors can't provide current product specs or images in a schema that can be uploaded directly to the retailer's consumer website. However, manufacturers often publish this valuable data on their own sites, which presents another opportunity for a website scraping tool to benefit you, your organization, and your consumers.

Conversely, if you're a manufacturer, you may want to make sure your retailers and distributors are using the most current product details you've published online. Some of our Fortune 500 customers have used Mozenda to set up routine scrapes that pull product data from their retailers' sites and cross-reference it with their own to make sure everything is accurate.
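A cross-reference like this can be sketched as a field-by-field comparison keyed by SKU. This minimal Python example assumes both catalogs have already been scraped into dicts keyed by SKU with hypothetical `title`, `price`, and `spec` fields; it reports products missing from the retailer's site and fields that disagree.

```python
def _normalize(value):
    """Collapse whitespace in strings so formatting noise isn't reported as a mismatch."""
    if isinstance(value, str):
        return " ".join(value.split())
    return value


def find_mismatches(own_catalog, retailer_catalog, fields=("title", "price", "spec")):
    """Compare a retailer's scraped product data against the manufacturer's own.

    Both catalogs are dicts keyed by SKU (hypothetical structure); each value
    is a dict of product fields. Returns (sku, field, ours, theirs) tuples.
    """
    issues = []
    for sku, ours in own_catalog.items():
        theirs = retailer_catalog.get(sku)
        if theirs is None:
            issues.append((sku, "missing", None, None))  # product not found on retailer site
            continue
        for field in fields:
            if _normalize(ours.get(field)) != _normalize(theirs.get(field)):
                issues.append((sku, field, ours.get(field), theirs.get(field)))
    return issues


# Usage (sample data is made up):
own = {
    "A1": {"title": "Oil Filter", "price": 9.99, "spec": "M20"},
    "B2": {"title": "Air Filter", "price": 14.5, "spec": "K7"},
}
retailer = {"A1": {"title": "Oil  Filter ", "price": 8.99, "spec": "M20"}}
for issue in find_mismatches(own, retailer):
    print(issue)
```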

Website Scraping Use Case #3: Price Monitoring

Price monitoring is a common way to see where you stand in a competitive landscape. Our customers use Mozenda to harvest pricing data for three purposes:

- Location pricing: to gain confidence in their location-based pricing policies

- Competitive pricing: to gain confidence that their pricing is competitive

- Distributor audits: to audit their distributors' or partner resellers' pricing and make sure they are adhering to their contractual obligations
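For the distributor audit, a minimal sketch might compare each scraped reseller price against a contractual floor. Here we assume, hypothetically, that the contract sets a minimum advertised price per SKU and that prices are scraped as strings like `"$1,299.00"`; real contract terms vary. `Decimal` is used instead of floats to avoid rounding surprises with currency.

```python
from decimal import Decimal


def audit_reseller_prices(scraped_prices, minimum_prices):
    """Flag reseller listings priced below a contractual minimum.

    scraped_prices: list of (reseller, sku, price_text) tuples from a scrape.
    minimum_prices: dict mapping sku -> minimum price string
                    (hypothetical minimum-advertised-price terms).
    """
    violations = []
    for reseller, sku, price_text in scraped_prices:
        floor = minimum_prices.get(sku)
        if floor is None:
            continue  # no contractual price on file for this SKU
        # Strip currency symbol and thousands separators before parsing.
        price = Decimal(price_text.replace("$", "").replace(",", "").strip())
        if price < Decimal(floor):
            violations.append((reseller, sku, price, Decimal(floor)))
    return violations


# Usage (sample data is made up):
scraped = [
    ("ShopA", "X1", "$1,299.00"),
    ("ShopB", "X1", "$1,249.99"),
    ("ShopC", "Y2", "$15.00"),
]
minimums = {"X1": "1299.00"}
print(audit_reseller_prices(scraped, minimums))
```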

For a web harvesting project like this, it's critical to know the problem your newfound data will solve. (We've written separately about how our most successful customers write problem statements for their web harvesting projects.) Having a problem statement before you start this type of project will help you decide which data fields to gather and gather them accurately.

For example, your problem statement might require you to harvest product assortment data to understand how many products a competitor sells and at what prices. Or it might require you to pull individual product descriptions to understand exactly what your competitor is selling and at what cost. These two problem statements call for different strategies and data schemas. Knowing your problem statement ahead of time will help you get data faster and build a strategy that can be easily adjusted, rather than having to scrap the project and start over with a new game plan.

Website Scraping Use Case #4: Customer Sentiment

Customer sentiment and reviewer information are critical in today's environment. The internet is now an established forum for customers to voice feedback, whether positive or negative, so knowing what your customers are saying in a timely manner is essential.

A household commercial appliance company came to us with a problem: their customers were no longer providing product feedback through the company's established channels. As a result, its product engineers and quality assurance department weren't getting the data they needed to be proactive. Instead, customers were sharing feedback across multiple online platforms in an uncontrolled environment. Because of this, the customer service department could no longer reach customers privately to issue replacement products, discounts, or any other measure that would limit the exposure of a negative customer experience.

To address this problem, the company set up routine web scrapes with Mozenda to gather online reviews and comments from multiple forums and social media platforms. Although the reviews remained public, the scraped data gave the company timely insight into product issues. This helped it set internal priorities and gave it a faster way to resolve negative customer experiences.

We've also seen customers use Mozenda to scrape specific hashtags and keywords surrounding a new product release. This kind of data offers insight into customer sentiment and the conversations customers are having about a product.

The best part about this strategy is that it can be automated. There are two levels for gathering customer sentiment data:

- High-level strategy: scraping review data to gain a sense of the average product rating

- Detailed-level strategy: scraping the actual review content to do further sentiment analysis
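The two levels above can be illustrated with a short Python sketch. The high-level function averages star ratings; the detailed-level function uses a tiny, hand-picked keyword list as a deliberately naive stand-in for real sentiment analysis (an assumption for illustration, not a recommended model). Review records are assumed to be dicts with hypothetical `stars` and `text` keys.

```python
from statistics import mean

# Tiny illustrative word lists (hypothetical); a real project would use a proper sentiment model.
POSITIVE = {"great", "love", "reliable", "quiet", "excellent"}
NEGATIVE = {"broke", "leak", "leaking", "noisy", "refund", "terrible"}


def average_rating(reviews):
    """High-level strategy: mean star rating across scraped reviews that have one."""
    stars = [r["stars"] for r in reviews if r.get("stars") is not None]
    return round(mean(stars), 2) if stars else None


def keyword_sentiment(text):
    """Detailed-level strategy (naive): +1 per positive word, -1 per negative word."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    return sum((w in POSITIVE) - (w in NEGATIVE) for w in words)


def flag_negative(reviews, threshold=0):
    """Return reviews whose keyword score falls below the threshold, e.g. for follow-up."""
    return [r for r in reviews if keyword_sentiment(r.get("text", "")) < threshold]


# Usage (sample reviews are made up):
reviews = [
    {"stars": 5, "text": "Love it, very quiet!"},
    {"stars": 2, "text": "Door seal started leaking after a week. Terrible."},
    {"stars": None, "text": "Works."},
]
print(average_rating(reviews))
print(flag_negative(reviews))
```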

The web scraping strategy you choose goes back to your problem statement. Within our Fortune 500 customers, many departments have overlapping challenges, and website scraping has become part of the solution for several of them. As one department implements these web harvesting strategies and begins to share its insights, others take note and apply website scraping to their own problem statements. This has created opportunities for cross-department collaboration within our customers' organizations. Often, the data you need is also valuable to another department.

No matter where you are in your data extraction strategy, we're here to help you discover your next step. Our solutions and services are trusted by one third of the Fortune 500. The use cases for website scraping are limitless, and we can help you develop the right one for your industry and your data needs. You can learn more about our software and service solutions below.

Learn More About Web Scraping Software

Learn More About Web Scraping Services

Best,

Kaleb Mangum

VP of Sales & Marketing at Mozenda.